Learn how offset commit strategies affect message delivery guarantees in 10 minutes.

A consumer reading from a Kafka partition may choose when to commit offsets. That decision controls whether messages are skipped or read twice after a consumer restart.

What you'll learn:

- The three delivery semantics: at-most-once, at-least-once, exactly-once

- How to implement each strategy

- When to use each approach

- Best practices for production systems

Delivery semantics overview

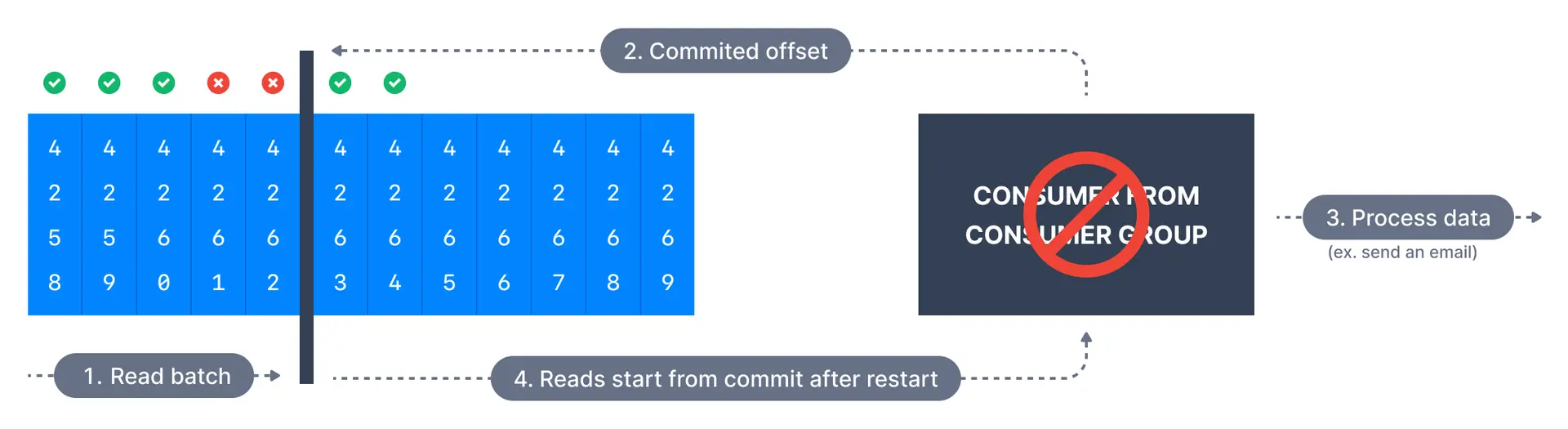

At most once delivery

In this case, offsets are committed as soon as a message batch is received after calling poll(). If the subsequent processing fails, the messages in that batch are lost: their offsets have already been committed, so they will not be read again after a restart.

When to use:

- Non-critical data (metrics, logs)

- When message loss is acceptable

- When processing duplicates is more problematic than losing data
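The effect of committing before processing can be seen in a small, Kafka-free simulation. This is only a sketch under stated assumptions: the in-memory list stands in for a partition, the `committed` integer stands in for the group's committed offset, and `AtMostOnceDemo`, `run`, and `crashAtOffset` are hypothetical names invented for illustration.

```java
import java.util.ArrayList;
import java.util.List;

/** In-memory stand-in for one partition and one consumer group's committed offset. */
final class AtMostOnceDemo {

    /**
     * Reads the log in batches, committing BEFORE processing, and fails once
     * while handling the record at crashAtOffset. Returns every record that
     * was successfully processed across the failure and the restart.
     */
    static List<String> run(List<String> log, int batchSize, int crashAtOffset) {
        List<String> processed = new ArrayList<>();
        int committed = 0;          // next offset the group resumes from
        boolean crashed = false;

        while (committed < log.size()) {
            int start = committed;  // a (re)started consumer resumes here
            int end = Math.min(start + batchSize, log.size());

            committed = end;        // at-most-once: commit right after the "poll"

            for (int offset = start; offset < end; offset++) {
                if (!crashed && offset == crashAtOffset) {
                    crashed = true; // simulated failure in the middle of the batch
                    break;          // the rest of the batch is never processed
                }
                processed.add(log.get(offset));
            }
        }
        return processed;
    }

    public static void main(String[] args) {
        List<String> log = List.of("m0", "m1", "m2", "m3", "m4", "m5");
        System.out.println(run(log, 3, 1)); // prints [m0, m3, m4, m5]
    }
}
```

With a batch size of 3 and a failure at offset 1, records m1 and m2 are never processed, because the restart resumes from the already committed offset 3.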

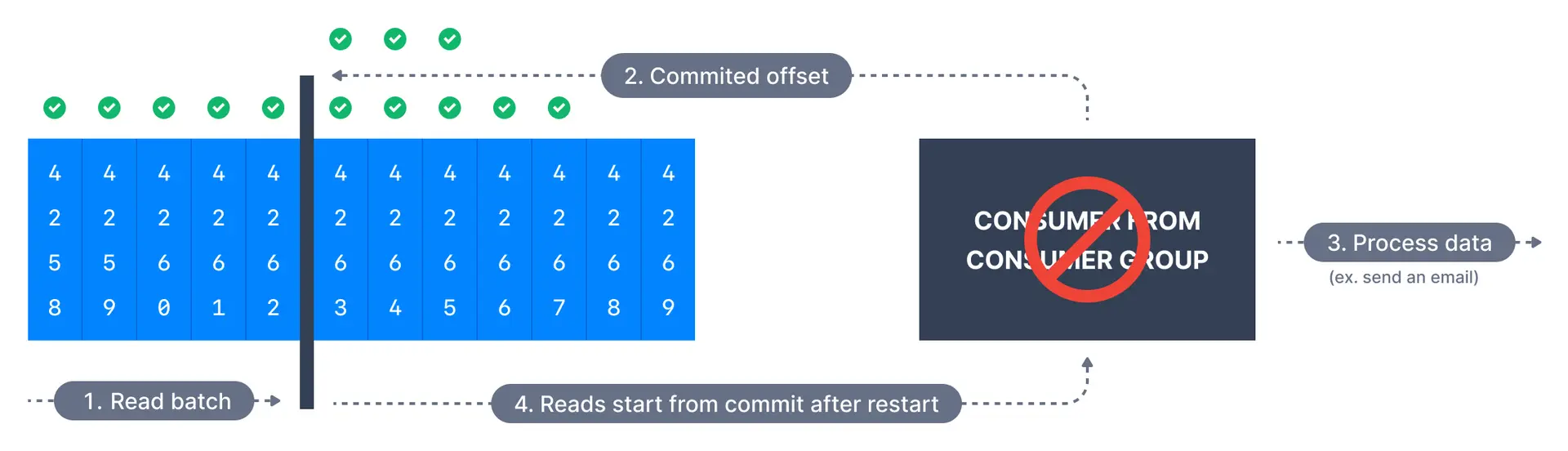

At least once delivery (usually preferred)

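In at-least-once delivery, every event from the source system reaches its destination, but retries can cause duplicates. Offsets are committed only after the message is processed, so if the consumer fails after processing but before committing, those messages are read again after the restart.

When to use: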

- Most production applications

- When data loss is unacceptable

- When you can handle duplicate processing
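A Kafka-free simulation makes the trade-off of committing after processing concrete. As a sketch only: the in-memory list stands in for a partition, `committed` stands in for the group's committed offset, and `AtLeastOnceDemo`, `run`, and `crashAtOffset` are hypothetical names used for illustration.

```java
import java.util.ArrayList;
import java.util.List;

/** In-memory stand-in for one partition and one consumer group's committed offset. */
final class AtLeastOnceDemo {

    /**
     * Reads the log in batches, committing only AFTER the whole batch is
     * processed, and fails once while handling the record at crashAtOffset.
     * Returns every record that was processed, including reprocessed ones.
     */
    static List<String> run(List<String> log, int batchSize, int crashAtOffset) {
        List<String> processed = new ArrayList<>();
        int committed = 0;          // next offset the group resumes from
        boolean crashed = false;

        while (committed < log.size()) {
            int start = committed;  // a (re)started consumer resumes here
            int end = Math.min(start + batchSize, log.size());
            boolean batchDone = true;

            for (int offset = start; offset < end; offset++) {
                if (!crashed && offset == crashAtOffset) {
                    crashed = true;     // simulated failure in the middle of the batch
                    batchDone = false;  // so the commit below is skipped
                    break;
                }
                processed.add(log.get(offset));
            }

            if (batchDone) {
                committed = end;    // at-least-once: commit after processing
            }
        }
        return processed;
    }

    public static void main(String[] args) {
        List<String> log = List.of("m0", "m1", "m2", "m3", "m4", "m5");
        System.out.println(run(log, 3, 1)); // prints [m0, m0, m1, m2, m3, m4, m5]
    }
}
```

Nothing is lost, but m0 is processed twice: it was handled before the failure, and the uncommitted batch is read again from offset 0 after the restart.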

Implement idempotent consumers

| Strategy | How it works | Example |
|---|---|---|
| Unique ID check | Track processed message IDs | Store ID in database before processing |
| Upsert operations | Use insert-or-update logic | Database upsert with message key |
| Conditional writes | Only write if not exists | Check-then-write with version check |
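The first two strategies in the table can be sketched in a few lines. This is an illustration, not a production design: the `HashSet` and `HashMap` are in-memory stand-ins for database tables, and `IdempotentHandler`, `applyOnce`, and `upsert` are hypothetical names.

```java
import java.util.HashMap;
import java.util.HashSet;
import java.util.Map;
import java.util.Set;

/** Handler that tolerates the same message being delivered more than once. */
final class IdempotentHandler {
    private final Set<String> seenIds = new HashSet<>();            // stand-in for a processed-IDs table
    private final Map<String, Integer> balances = new HashMap<>();  // stand-in for the target table

    /** Unique ID check: applies the delta only the first time messageId is seen. */
    boolean applyOnce(String messageId, String account, int delta) {
        if (!seenIds.add(messageId)) {
            return false; // duplicate delivery, skip the side effect
        }
        balances.merge(account, delta, Integer::sum);
        return true;
    }

    /** Upsert: writing the same key and value twice leaves the same state. */
    void upsert(String account, int newBalance) {
        balances.put(account, newBalance);
    }

    int balance(String account) {
        return balances.getOrDefault(account, 0);
    }
}
```

Calling `applyOnce("msg-1", "acct", 50)` twice changes the balance once. With a real database, the ID insert and the business write would typically need to share one database transaction, otherwise a failure between the two could still lose or duplicate the update.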

Exactly once delivery

Some applications require exactly-once semantics, where each message is delivered exactly once. This can be achieved in certain situations if Kafka and the consumer application cooperate:

- Kafka topic to Kafka topic workflows can achieve it using the transactions API

- For Kafka topic to external system workflows, use an idempotent consumer

When to use:

- Financial transactions

- Kafka Streams applications

- Critical data pipelines where duplicates cause problems
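For the topic-to-topic case, the client settings involved might look like the sketch below. The configuration keys are standard Kafka client settings; the class name `ExactlyOnceConfig`, the method names, and any values passed in are assumptions for illustration, and serializer settings are left out for brevity.

```java
import java.util.Properties;

/** Builds client settings for a transactional read-process-write loop. */
final class ExactlyOnceConfig {

    /** Settings for the consumer that reads the input topic. */
    static Properties consumer(String bootstrapServers, String groupId) {
        Properties p = new Properties();
        p.setProperty("bootstrap.servers", bootstrapServers);
        p.setProperty("group.id", groupId);
        p.setProperty("enable.auto.commit", "false");       // offsets are committed through the transaction
        p.setProperty("isolation.level", "read_committed"); // hide records from aborted transactions
        return p;
    }

    /** Settings for the producer that writes the output topic. */
    static Properties producer(String bootstrapServers, String transactionalId) {
        Properties p = new Properties();
        p.setProperty("bootstrap.servers", bootstrapServers);
        p.setProperty("transactional.id", transactionalId); // enables the transactions API
        p.setProperty("enable.idempotence", "true");
        p.setProperty("acks", "all");
        return p;
    }
}
```

With these settings, the Java producer's `initTransactions()`, `beginTransaction()`, `sendOffsetsToTransaction()`, and `commitTransaction()` methods let the output records and the consumed offsets be committed together, or aborted together with `abortTransaction()`.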

Summary comparison

| Semantic | Commits when | Risk | Complexity | Use case |
|---|---|---|---|---|
| At most once | Before processing | Data loss | Low | Metrics, logs |
| At least once | After processing | Duplicates | Low | Most applications |
| Exactly once | With transaction | None (if possible) | High | Financial, critical |

Automatic offset committing strategy

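By default, consumers are configured with enable.auto.commit=true, which means that offsets are committed automatically on a schedule (every auto.commit.interval.ms, 5 seconds by default) during calls to poll(). This provides at-least-once delivery semantics as long as every record from a batch is fully processed before the next call to poll().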
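Spelled out explicitly, the automatic strategy corresponds to consumer settings like the ones below. The keys are standard consumer configuration names and the values shown are the defaults; the class name `AutoCommitConfig` and the `build` method are hypothetical, and the resulting `Properties` object would be passed to a `KafkaConsumer` constructor.

```java
import java.util.Properties;

/** Builds consumer settings with automatic offset commits made explicit. */
final class AutoCommitConfig {

    static Properties build(String bootstrapServers, String groupId) {
        Properties props = new Properties();
        props.setProperty("bootstrap.servers", bootstrapServers);
        props.setProperty("group.id", groupId);
        props.setProperty("key.deserializer",
                "org.apache.kafka.common.serialization.StringDeserializer");
        props.setProperty("value.deserializer",
                "org.apache.kafka.common.serialization.StringDeserializer");
        props.setProperty("enable.auto.commit", "true");      // the default, shown for clarity
        props.setProperty("auto.commit.interval.ms", "5000"); // default commit interval
        return props;
    }
}
```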

Manual offset committing strategy

You can also control when offsets are committed by setting enable.auto.commit=false and calling the commitSync() or commitAsync() methods to commit offsets manually.

Commit strategies comparison

| Strategy | Latency | Reliability | Use case |
|---|---|---|---|
| commitSync() | Higher | Guaranteed | Critical data |
| commitAsync() | Lower | Best effort | High throughput |
| Batch + sync | Balanced | Guaranteed | Most applications |
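The "Batch + sync" row can be sketched as a small buffering helper. This is a simplified illustration for a single partition: `BatchCommitter`, the `flush` sink, and the `commitOffset` hook are hypothetical names, not part of the Kafka client.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Consumer;
import java.util.function.LongConsumer;

/** Buffers records from ONE partition and commits only after a batch is flushed. */
final class BatchCommitter<T> {
    private final int batchSize;
    private final Consumer<List<T>> flush;    // hypothetical sink, e.g. a bulk database write
    private final LongConsumer commitOffset;  // hypothetical hook that performs the commit
    private final List<T> buffer = new ArrayList<>();
    private long lastOffset = -1;

    BatchCommitter(int batchSize, Consumer<List<T>> flush, LongConsumer commitOffset) {
        this.batchSize = batchSize;
        this.flush = flush;
        this.commitOffset = commitOffset;
    }

    /** Adds one record and flushes when the batch is full. */
    void accept(long offset, T value) {
        buffer.add(value);
        lastOffset = offset;
        if (buffer.size() >= batchSize) {
            flushAndCommit();
        }
    }

    /** Flushes buffered records, then commits. Call on shutdown for the tail. */
    void flushAndCommit() {
        if (buffer.isEmpty()) {
            return;
        }
        flush.accept(List.copyOf(buffer));   // if this throws, nothing is committed
        commitOffset.accept(lastOffset + 1); // committed offset = next record to read
        buffer.clear();
    }
}
```

In a real consumer, the hook would likely call `commitSync()` with an explicit offsets map for the partition, since the no-argument form commits the positions from the last `poll()`, which may be ahead of what has been flushed.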

See it in practice with Conduktor

Conduktor Console lets you monitor consumer offsets and lag per partition. Track commit progress and identify processing delays to validate your delivery semantics implementation.

Next steps

- Configure consumer settings for optimal performance

- Configure auto offset reset for new consumers

- Write a Java consumer with hands-on code