Cost control is one of the sections in the Insights dashboard. It helps platform teams identify topics consuming unnecessary storage and make informed cleanup and retention decisions.

Overview

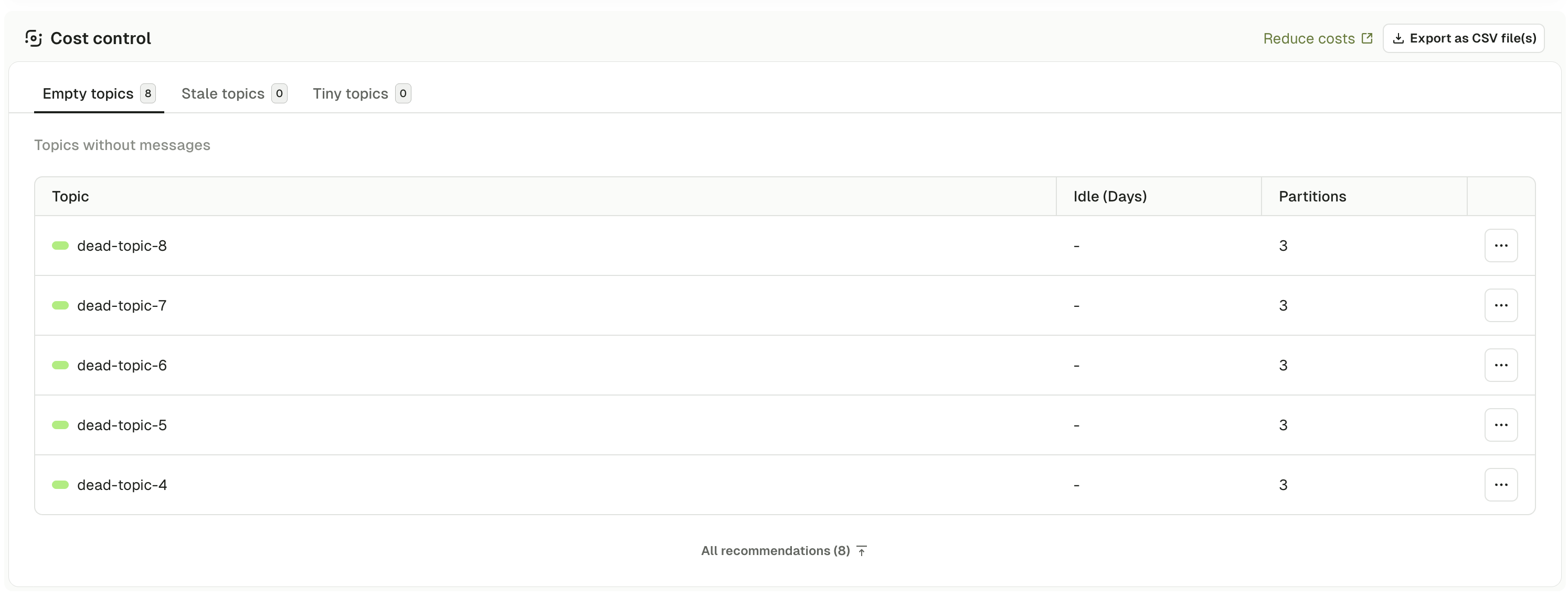

The Cost Control section displays three categories of topics that may be wasting storage:

- Empty topics - topics with no messages that consume metadata overhead

- Stale topics - topics with no writes in a configured time period (default: 7+ days)

- Tiny topics - topics with minimal data volume relative to their partition overhead

What the dashboard shows

Empty topics

Topics containing zero messages. These topics have been created but never received data, or have had all messages deleted through retention policies. For each topic, the dashboard shows the name, idle time in days, and partition count.

Empty topics consume cluster resources even without data: metadata storage in ZooKeeper or KRaft, memory for buffers, and often per-partition charges on cloud Kafka services.

Delete an empty topic

- topics created for abandoned projects or experiments

- temporary topics used for testing or debugging

- topics created by mistake with typos in the name

- legacy topics from deprecated applications

- topics created automatically by frameworks but never used

- topics pre-created for upcoming features or applications

- topics serving as placeholders for future data pipelines

- topics referenced in consumer group configurations awaiting data

- topics used as dead letter queues that only receive messages in error scenarios

Verify the topic is unused

- Consumer Groups tab for active consumers or unconsumed data (lag > 0)

- Monitoring tab graphs to confirm zero produce activity

- Application references in codebases and deployment configs

- Dependencies - verify no streaming jobs or external systems depend on the topic

Delete from the list

Stale topics

Topics with no write activity for 7+ days and no active consumer groups. For each topic, the dashboard shows the name, idle time in days, and partition count.

Stale topics often indicate abandoned data pipelines from shut-down applications, failed producers, or cancelled projects. They continue consuming storage through retention policies and add operational overhead.
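The stale-topic criteria above can be expressed as a small check. This is a minimal sketch, not how the dashboard computes staleness: the function names, the parameters, and the idea of passing in a count of active consumer groups are all assumptions made for illustration.

```python
from datetime import datetime, timedelta, timezone


def idle_days(last_write: datetime, now: datetime) -> int:
    """Whole days elapsed since the last write to the topic."""
    return (now - last_write).days


def is_stale(last_write: datetime, active_consumer_groups: int,
             now: datetime, threshold_days: int = 7) -> bool:
    """Hypothetical helper: stale means no writes for at least
    `threshold_days` (7 by default, matching the text above)
    and no active consumer groups."""
    no_recent_writes = (now - last_write) >= timedelta(days=threshold_days)
    return no_recent_writes and active_consumer_groups == 0


if __name__ == "__main__":
    now = datetime(2025, 1, 15, tzinfo=timezone.utc)
    last = datetime(2025, 1, 1, tzinfo=timezone.utc)
    print(idle_days(last, now), is_stale(last, 0, now))
```

Passing `now` explicitly keeps the check deterministic, which makes it easier to test against recorded topic metadata.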

Evaluate and handle stale topics

- Check consumer groups - Are any consumers actively reading from the topic?

- Review consumer lag - Is there unconsumed data that applications still need?

- Verify business requirements - Is the historical data needed for compliance or analytics?

- Contact topic owners - Do the application teams still need this topic?

- Check data lineage - Do downstream systems depend on this data?

- No active consumer groups subscribed

- All consumers show zero lag (all data has been consumed)

- Application owners confirm the topic is no longer needed

- No compliance requirements for data retention

- Historical data has been archived to long-term storage

- Active consumers are still reading historical data

- Data is required for compliance or audit purposes

- Topic serves as a source of truth for event sourcing

- Downstream analytics or reporting systems depend on the data

- Occasional writes expected (seasonal or infrequent events)

Archive stale topic data before deletion

Export the topic data

Verify the archive

Reduce retention on stale topics

Update retention settings

- retention.ms - Time-based retention (e.g., 86400000 for 1 day)

- retention.bytes - Size-based retention per partition (e.g., 1073741824 for 1 GB)
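The example values above are plain unit conversions. The sketch below shows the arithmetic; the helper names are hypothetical, and the size conversion assumes binary units (1024-based), which is what the 1073741824 figure corresponds to.

```python
MS_PER_DAY = 24 * 60 * 60 * 1000   # milliseconds in one day
BYTES_PER_GIB = 1024 ** 3          # bytes in one binary gigabyte


def retention_ms(days: float) -> int:
    """Convert a retention period in days to a retention.ms value."""
    return int(days * MS_PER_DAY)


def retention_bytes(gib: float) -> int:
    """Convert a per-partition size limit to a retention.bytes value."""
    return int(gib * BYTES_PER_GIB)


def retention_config(days: float, gib: float) -> dict:
    """Build the two topic settings as string values keyed by config name."""
    return {
        "retention.ms": str(retention_ms(days)),
        "retention.bytes": str(retention_bytes(gib)),
    }


if __name__ == "__main__":
    print(retention_config(1, 1))
    # {'retention.ms': '86400000', 'retention.bytes': '1073741824'}
```

Because retention.bytes applies per partition, the total disk bound for a topic is roughly that value multiplied by the partition count.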

Tiny topics

Topics meeting all of the following: more than 1 partition, less than 10 MB of data OR fewer than 1000 messages, and name does not end with _repartition.

For each topic, the dashboard shows the name, idle time in days, and partition count.

Topics with many partitions but little data represent inefficient resource allocation. Cloud Kafka services often charge per partition, and high partition counts increase controller overhead.
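The tiny-topic criteria combine an AND across three conditions with an OR inside the volume condition, which is easy to misread. The sketch below spells out that logic. `TopicStats` is a hypothetical record, not a Conduktor type, and treating 10 MB as 10 × 1024 × 1024 bytes is an assumption, since the exact unit is not stated.

```python
from dataclasses import dataclass

TEN_MB = 10 * 1024 * 1024  # assumption: binary megabytes


@dataclass
class TopicStats:
    """Hypothetical per-topic snapshot used only for this example."""
    name: str
    partitions: int
    size_bytes: int
    message_count: int


def is_tiny(topic: TopicStats) -> bool:
    """True when all three tiny-topic criteria hold."""
    multi_partition = topic.partitions > 1
    # Either low size OR low message count satisfies the volume criterion.
    low_volume = topic.size_bytes < TEN_MB or topic.message_count < 1000
    not_repartition = not topic.name.endswith("_repartition")
    return multi_partition and low_volume and not_repartition


if __name__ == "__main__":
    print(is_tiny(TopicStats("orders-audit", 12, 2048, 50)))
```

Note that a topic holding more than 10 MB still counts as tiny under these rules if it has fewer than 1000 messages.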

Optimize tiny topics

- Consolidate topics

- Recreate with fewer partitions

- Accept current state

- Identify candidates from the same application with similar schemas

- Design consolidated topic structure with category or type fields

- Update producers and consumers accordingly

Our recommendations

Prevent cost control issues through governance and automated ownership tracking rather than manual remediation.

Use Self-service for ownership and governance: it brings a GitOps approach to topic lifecycle management where applications represent streaming apps or data pipelines and dictate ownership of Kafka resources.

Benefits for cost control:

- Automatic ownership tracking - topics created through Self-service have clear ownership from creation

- Policy enforcement - application instance policies can prevent creation of tiny topics by enforcing minimum data volume or maximum partition count requirements

- Naming conventions - policies enforce consistent naming that includes environment and ownership information

- Lifecycle management - clear application ownership reduces abandoned topics through better tracking and accountability

Troubleshoot

Why does an empty topic show recent activity in monitoring?

Can I recover a topic after deletion?

How do I identify topics created by internal Kafka processes?

Internal topics include __consumer_offsets (consumer group offsets), __transaction_state (transactional state), _schemas (Schema Registry metadata), and topics matching .*-changelog or .*-repartition (Kafka Streams internals).

Never delete __consumer_offsets or __transaction_state, as this causes cluster-wide failures. Only delete Kafka Streams topics when the application is permanently decommissioned and all instances are shut down.
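The names and patterns listed above can be turned into a simple filter to run over a topic list before any cleanup. This is a sketch only: the category labels returned by `classify_topic` are made up for illustration, and name matching alone cannot prove that a topic is internal.

```python
import re

# Deleting these causes cluster-wide failures, per the guidance above.
NEVER_DELETE = {"__consumer_offsets", "__transaction_state"}
SCHEMA_REGISTRY = {"_schemas"}
# Kafka Streams internal topics: names ending in -changelog or -repartition.
STREAMS_INTERNAL = re.compile(r".*-(changelog|repartition)")


def classify_topic(name: str) -> str:
    """Return a hypothetical category label for a topic name."""
    if name in NEVER_DELETE:
        return "never-delete"
    if name in SCHEMA_REGISTRY:
        return "schema-registry"
    if STREAMS_INTERNAL.fullmatch(name):
        return "kafka-streams-internal"
    return "user"


if __name__ == "__main__":
    for topic in ["__consumer_offsets", "_schemas",
                  "app-store-changelog", "orders"]:
        print(topic, "->", classify_topic(topic))
```

A user topic that happens to end in -changelog would also match, so treat the result as a prompt for review rather than a verdict.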

Why does reducing retention not free up disk space immediately?

How do I handle topics with unknown ownership?

scheduled-for-deletion label with a future date (for example, 30 days) and send announcements asking owners to identify themselves. After the grace period expires with no claims, proceed with deletion.

Implement a policy requiring application labels at topic creation time. Use RBAC permissions to enforce labeling as a prerequisite for topic creation.
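The grace-period approach above comes down to date arithmetic. The sketch below computes a label value and checks whether the period has expired. The ISO date format for the label value and both function names are assumptions; use whatever format your labeling convention requires.

```python
from datetime import date, timedelta


def deletion_label(today: date, grace_days: int = 30) -> dict:
    """Build a scheduled-for-deletion label dated `grace_days` ahead.
    The ISO-8601 value format is an assumption for this example."""
    deadline = today + timedelta(days=grace_days)
    return {"scheduled-for-deletion": deadline.isoformat()}


def grace_period_expired(labels: dict, today: date) -> bool:
    """True once today is on or after the labeled deletion date."""
    value = labels.get("scheduled-for-deletion")
    if value is None:
        return False  # topic was never scheduled for deletion
    return today >= date.fromisoformat(value)


if __name__ == "__main__":
    labels = deletion_label(date(2025, 1, 1))
    print(labels)  # {'scheduled-for-deletion': '2025-01-31'}
    print(grace_period_expired(labels, date(2025, 2, 1)))
```

Expiry of the date only makes a topic eligible for deletion; the check for ownership claims still has to happen separately.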

What if a stale topic suddenly receives writes after months of inactivity?

usage-pattern: seasonal or usage-pattern: emergency label, and document the activity pattern. Do not delete topics showing this pattern without understanding the business requirement.